SMASH: Mastering Scalable Whole-Body Skills for Humanoid Ping-Pong with Egocentric Vision

The future of robotics begins where the lab ends: in open-world interaction.

Dynamic Motion

100+ consecutive stable rallies

Methodology

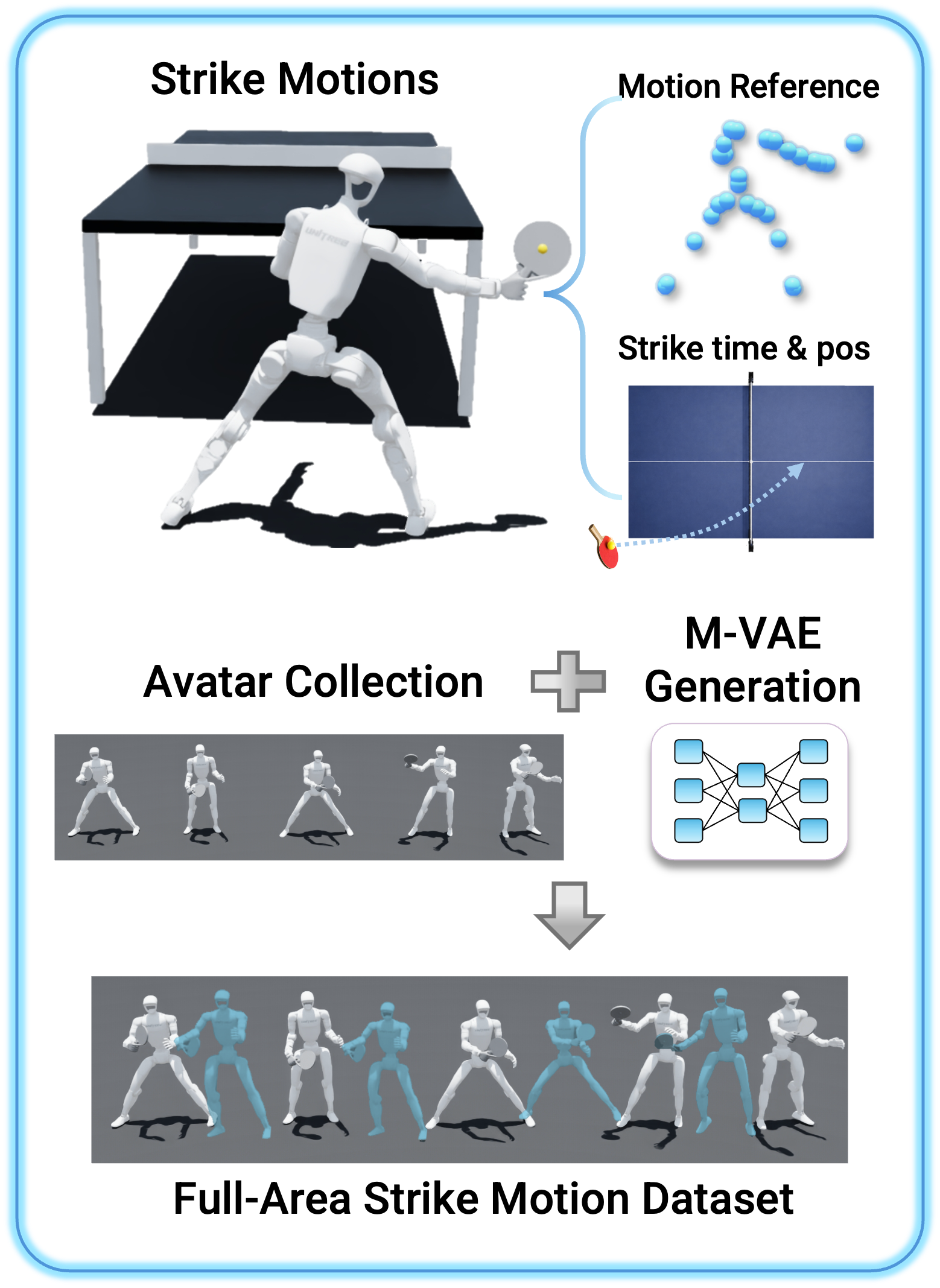

Data

We expand sparse motion-capture demonstrations with Motion-VAE generation into a strike library that covers the workspace. This improves training efficiency: the policy learns task-aligned motions more effectively while reducing motion loss.
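The page does not say how the Motion-VAE expands the demonstrations, so what follows is only a minimal sketch under stated assumptions.

It reads "workspace-covering" as a grid over ball-contact points (lateral position and height). Around each seed clip, it samples latents with the standard VAE reparameterization trick. A decoded clip is kept only if it fills a grid cell that is not yet covered.

`StrikeClip`, `Workspace`, `expand_library` and the `encode`/`decode` callables are all hypothetical names, not the authors' code. The callables stand in for a trained Motion-VAE encoder and decoder.

```python
import math
import random
from dataclasses import dataclass
from typing import Callable, List, Sequence, Set, Tuple

Cell = Tuple[int, int]


@dataclass
class StrikeClip:
    name: str
    # Hypothetical summary of a clip: (lateral y, height z) of ball contact, metres.
    contact: Tuple[float, float]


@dataclass
class Workspace:
    origin: Tuple[float, float]  # lower-left corner of the strike region
    cell_size: float
    n_cols: int
    n_rows: int

    def cell_of(self, point: Tuple[float, float]) -> Cell:
        return (math.floor((point[0] - self.origin[0]) / self.cell_size),
                math.floor((point[1] - self.origin[1]) / self.cell_size))

    def contains(self, cell: Cell) -> bool:
        return 0 <= cell[0] < self.n_cols and 0 <= cell[1] < self.n_rows

    def n_cells(self) -> int:
        return self.n_cols * self.n_rows


def covered_cells(library: Sequence[StrikeClip], ws: Workspace) -> Set[Cell]:
    """In-bounds grid cells that already contain at least one strike."""
    cells = {ws.cell_of(c.contact) for c in library}
    return {c for c in cells if ws.contains(c)}


def sample_latent(mu: List[float], sigma: List[float], rng: random.Random) -> List[float]:
    # Reparameterization trick: z = mu + sigma * eps with eps ~ N(0, I).
    return [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mu, sigma)]


def expand_library(
    seeds: Sequence[StrikeClip],
    encode: Callable[[StrikeClip], Tuple[List[float], List[float]]],  # stand-in for VAE encoder
    decode: Callable[[List[float], str], StrikeClip],                 # stand-in for VAE decoder
    ws: Workspace,
    max_samples: int,
    rng: random.Random,
) -> List[StrikeClip]:
    library = list(seeds)
    covered = covered_cells(library, ws)
    for k in range(max_samples):
        if len(covered) == ws.n_cells():
            break  # every workspace cell already has a strike
        mu, sigma = encode(rng.choice(seeds))
        clip = decode(sample_latent(mu, sigma, rng), f"gen_{k}")
        cell = ws.cell_of(clip.contact)
        # Keep only samples that land in a new, in-bounds cell.
        if ws.contains(cell) and cell not in covered:
            library.append(clip)
            covered.add(cell)
    return library
```

With a trained model, `encode` would return the posterior mean and standard deviation for a clip. `decode` would produce a full motion, and its contact point would be read off the racket trajectory. Filtering by empty cells is one simple way to favor coverage over redundant samples.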

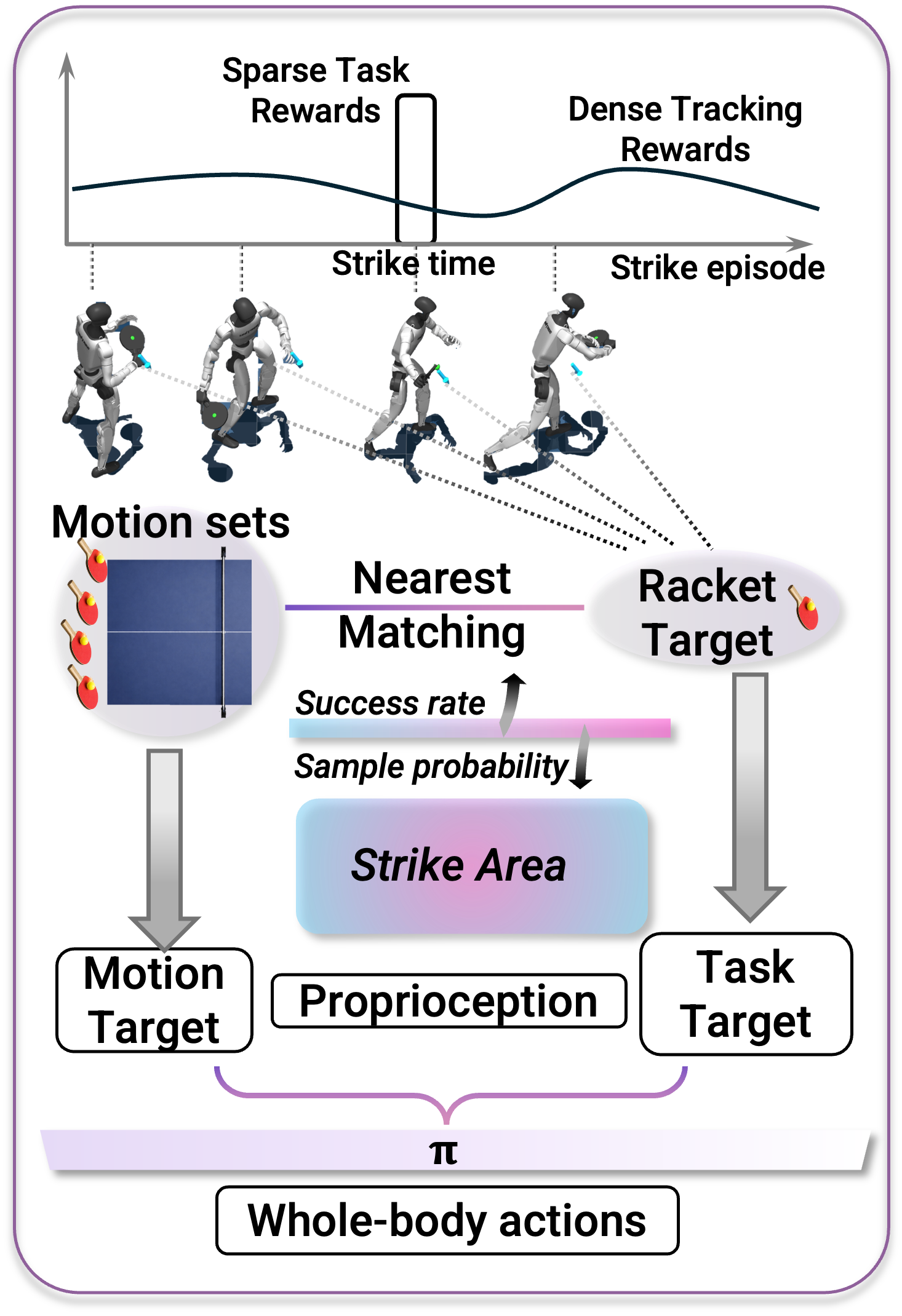

Policy

We train a task-aligned whole-body policy that matches motion priors to each desired strike target, achieving precise ball interaction while preserving natural coordination and enabling agile behaviors such as smashes and low crouching shots.
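The page does not specify how the policy "matches motion priors to each desired strike target." A minimal sketch, assuming each library clip is summarized by a single 3D contact point, is a nearest-neighbor lookup. It returns the closest clip plus the residual offset that the policy would still need to correct.

`match_prior`, the clip names and the coordinates are hypothetical.

```python
import math
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]


def match_prior(target: Vec3, library: Dict[str, Vec3]) -> Tuple[str, Vec3]:
    """Pick the clip whose contact point is nearest to `target`.

    Returns the clip name and the residual offset (target - contact) that a
    tracking policy would still have to absorb.
    """
    if not library:
        raise ValueError("strike library is empty")
    # Euclidean nearest neighbor over hypothetical per-clip contact points.
    name = min(library, key=lambda k: math.dist(library[k], target))
    contact = library[name]
    offset = tuple(t - c for t, c in zip(target, contact))
    return name, offset
```

A real system might also weigh time-to-contact or racket orientation, or blend several neighbors rather than picking one. Plain Euclidean distance is simply the smallest choice that illustrates the idea.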

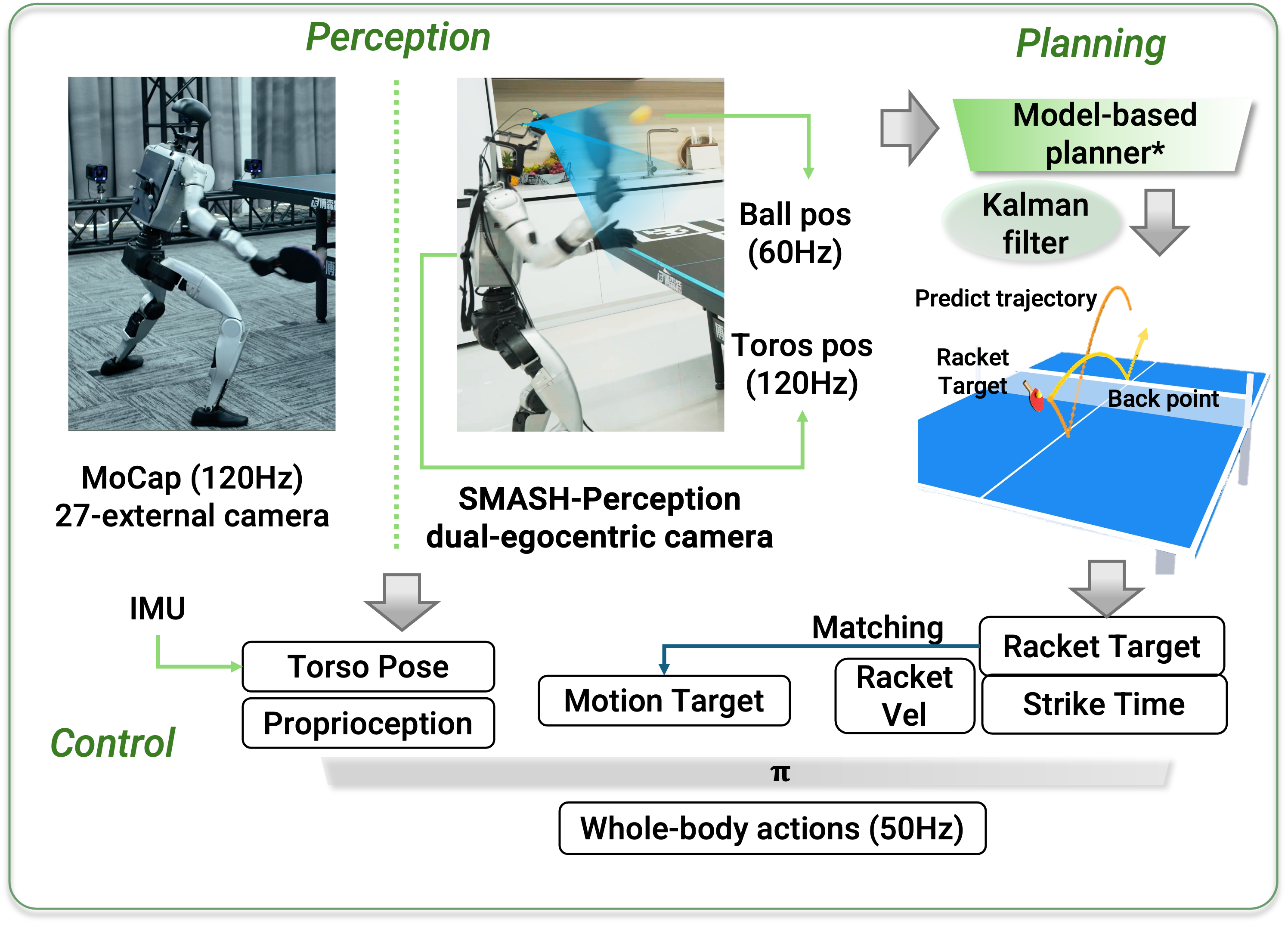

Deploy

We deploy the system with egocentric onboard perception that estimates both ball and robot state in real time, enabling the first outdoor humanoid table-tennis interaction without external cameras or motion-capture infrastructure.
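On the perception side, a common way to turn noisy onboard ball detections into a strike target is to fit a ballistic model. The model then predicts where the ball crosses a strike plane. The page does not say which estimator SMASH actually uses. The sketch below is a minimal version that rests on three assumptions:

- Detections are already transformed into a table-fixed frame using the estimated robot pose.
- Drag, spin and bounces are ignored, so the model should be fit only to the segment after the table bounce.
- Plain least squares is used for the fit.

`fit_ballistic` and `predict_at_plane` are hypothetical names.

```python
from typing import Optional, Sequence, Tuple

G = 9.81  # gravitational acceleration, m/s^2
Vec3 = Tuple[float, float, float]


def _linfit(ts: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least squares for y = a + b * t; returns (a, b)."""
    n = len(ts)
    mean_t = sum(ts) / n
    mean_y = sum(ys) / n
    s_tt = sum((t - mean_t) ** 2 for t in ts)
    if s_tt == 0:
        raise ValueError("timestamps must not all be equal")
    s_ty = sum((t - mean_t) * (y - mean_y) for t, y in zip(ts, ys))
    b = s_ty / s_tt
    return mean_y - b * mean_t, b


def fit_ballistic(ts: Sequence[float], points: Sequence[Vec3]) -> Tuple[Vec3, Vec3]:
    """Fit p(t) = p0 + v0 * t - 0.5 * G * t^2 * e_z; returns (p0, v0) at t = 0."""
    if len(ts) < 2 or len(ts) != len(points):
        raise ValueError("need at least two timestamped detections")
    x0, vx = _linfit(ts, [p[0] for p in points])
    y0, vy = _linfit(ts, [p[1] for p in points])
    # Adding back the known gravity term makes z linear in t as well.
    z0, vz = _linfit(ts, [p[2] + 0.5 * G * t * t for t, p in zip(ts, points)])
    return (x0, y0, z0), (vx, vy, vz)


def predict_at_plane(p0: Vec3, v0: Vec3, x_plane: float) -> Optional[Tuple[float, Vec3]]:
    """Time and position at which the ball crosses x = x_plane, or None if it never does."""
    if abs(v0[0]) < 1e-9:
        return None
    t = (x_plane - p0[0]) / v0[0]
    if t < 0:
        return None  # the plane lies behind the ball's direction of travel
    return t, (x_plane, p0[1] + v0[1] * t, p0[2] + v0[2] * t - 0.5 * G * t * t)
```

For example, take a ball observed at (-1.0, 0.2, 1.0) m moving at (4.0, -0.5, 1.0) m/s. It reaches the plane x = 0.6 m after 0.4 s, at y = 0.0 m and a height of about 0.615 m.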

Contributors

Junli Ren†*, Yinghui Li†*, Kai Zhang*, Penglin Fu*, and more to come...

† Project lead, * Equal contribution

Acknowledgement

This work was conducted during the authors' internship at Kinetix AI. Special thanks to Huige Tong, Yuxing Zhang, Zhihong Liao, Yue Jiang, Yifeng Huang, Lirui Zhao, Jialong Zeng, Shijia Peng, Jiazhi Yang, Chonghao Sima, Checheng Yu, and Modi Shi for their excellent support and insightful discussions.